The programmatic supply chain is hemorrhaging budget, with billions of dollars vaporized while legacy verification vendors pat themselves on the back simply because a telemetry pixel fired successfully.

They see a DOM render and blindly log it as a human interaction. Fraudsters abandoned rudimentary scraping scripts a decade ago; today, they weaponize enterprise-grade browser automation to systematically strip-mine your campaigns while the broader ad-tech ecosystem remains comfortably oblivious.

The Illusion of JavaScript Verification

The ecosystem is dangerously complacent. Viewability vendors routinely slap “100% Valid Traffic” labels on sessions generated by headless Chrome containers. These nodes run on cheap, frequently abused hosting ASNs like Choopa and DigitalOcean, or hide behind heavily abused M200 residential proxy networks.

You pay a premium for third-party verification. You think your traffic is clean. It is not.

The core architectural failure of modern ad-tech is painfully simple. The entire industry relies purely on client-side JavaScript execution to verify an impression. If the viewability tag fires and the math checks out, the DSP logs a win.

The publisher gets paid. Bot syndicates realized this. They built infrastructure exclusively to execute those exact JavaScript payloads.

If you want to survive, you have to start reverse-engineering bot infrastructure from the ground up.

Bot operators do not need a human looking at a screen. They just need a headless browser with a real JavaScript engine. It parses the ad-tech SDKs, loads the iframes, and triggers the IntersectionObserver API.

The ad renders in the dark. Your budget drains.
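To see how low the bar is when "the math checks out", here is a minimal sketch of the kind of check a viewability tag effectively performs. It assumes the MRC display guideline (at least 50% of pixels in view for one continuous second). The `isViewable` name and the `samples` input are illustrative, shaped like `IntersectionObserverEntry` records; this is not any vendor's actual tag.

```javascript
// Assumption: MRC display guideline, 50% of pixels for 1 continuous second.
const MIN_RATIO = 0.5;
const MIN_DURATION_MS = 1000;

// samples: time-ordered records with `time` (ms) and `intersectionRatio` (0..1).
function isViewable(samples) {
  let windowStart = null; // start of the current in-view streak
  for (const { time, intersectionRatio } of samples) {
    if (intersectionRatio >= MIN_RATIO) {
      if (windowStart === null) windowStart = time;
      if (time - windowStart >= MIN_DURATION_MS) return true;
    } else {
      windowStart = null; // streak broken, start over
    }
  }
  return false;
}

// A headless browser satisfies this as easily as a person does.
const headlessSession = [
  { time: 0, intersectionRatio: 1 },
  { time: 1000, intersectionRatio: 1 },
];
console.log(isViewable(headlessSession)); // true
```

Nothing in the input says who, or what, was looking at the slot. The check measures geometry and time, not intent.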

The Dark Ages: cURL, Python, and the API Scraping Era

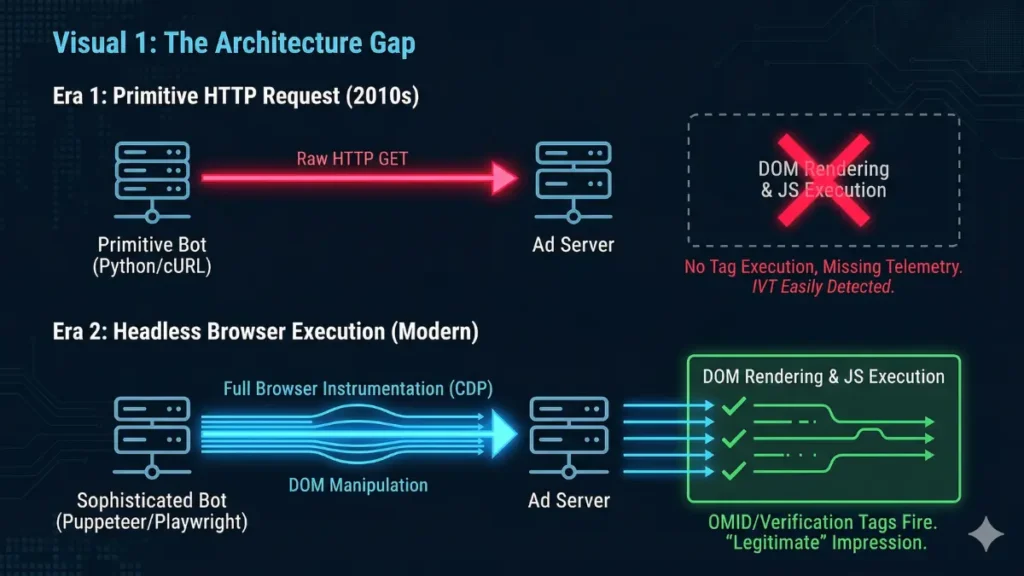

The early 2010s ad fraud model was laughably primitive, with attackers relying on Python's requests library or bash scripts that executed raw cURL commands.

They hammered ad server endpoints with HTTP GET requests to inflate impression counts through pure, dumb volume.

However, this brute-force approach had a fatal flaw: raw HTTP requests do not render the Document Object Model (DOM). They merely pull the HTML payload, drop a cookie, and immediately sever the TCP connection without executing a single line of JavaScript.

No pixels fire. Blue teams crushed this tier of fraud with elementary log-level analysis. The fingerprints were massive, glowing red flags.

Any junior analyst could spot them:

- Zero JavaScript Execution: The client never actually requested the accompanying .js verification payload.
- Header Discrepancies: HTTP requests routinely lacked basic Referer or Origin headers.
- Botched Signatures: They consistently passed blank or malformed Accept-Language fields.
- Static User-Agents: Millions of requests shared the exact same default python-requests/2.25.1 UA string.
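The four fingerprints above translate almost directly into a log filter. The following is a minimal sketch, assuming each log record carries the request headers plus a `fetchedVerificationJs` flag joined in from the tag server's logs. The record shape, the flag names, and the `flagRawHttpClient` function are all hypothetical.

```javascript
// Rough Accept-Language grammar: language ranges with optional q-weights.
const LANG_RANGE = "(?:\\*|[a-z]{1,8}(?:-[a-z0-9]{1,8})*)";
const WEIGHT = "(?:\\s*;\\s*q=[01](?:\\.\\d{1,3})?)?";
const ACCEPT_LANGUAGE = new RegExp(
  `^${LANG_RANGE}${WEIGHT}(?:\\s*,\\s*${LANG_RANGE}${WEIGHT})*$`,
  "i"
);
// Default User-Agent prefixes of common HTTP libraries (illustrative list).
const LIBRARY_UA = /^(?:python-requests|curl|Wget|Go-http-client)\//i;

function flagRawHttpClient(record) {
  // Header names are case-insensitive, so normalize them first.
  const headers = {};
  for (const [name, value] of Object.entries(record.headers || {})) {
    headers[name.toLowerCase()] = String(value).trim();
  }
  const flags = [];
  if (!record.fetchedVerificationJs) flags.push("no-js-execution");
  if (!headers["referer"] && !headers["origin"]) flags.push("missing-referer-origin");
  if (!ACCEPT_LANGUAGE.test(headers["accept-language"] || "")) flags.push("bad-accept-language");
  if (LIBRARY_UA.test(headers["user-agent"] || "")) flags.push("library-user-agent");
  return flags;
}

const scripted = flagRawHttpClient({
  headers: { "User-Agent": "python-requests/2.25.1" },
  fetchedVerificationJs: false,
});
console.log(scripted);
// [ 'no-js-execution', 'missing-referer-origin', 'bad-accept-language', 'library-user-agent' ]
```

Any single flag can show up in legitimate traffic (privacy tools strip Referer, for example), so a real filter would likely act on combinations rather than one flag alone.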

It was a turkey shoot. We blocked entire datacenter ASNs and moved on. But the syndicates adapted.

The Chromium Renaissance: Weaponizing Puppeteer and Playwright

Fraudsters didn’t just iterate; they abandoned raw HTTP scripting entirely in favor of full-fledged, weaponized browser automation frameworks like Puppeteer, Playwright, and Selenium.

These are not simple scrapers. They are professional-grade instrumentation tools possessing the exact same rendering capabilities as the Chrome browser currently sitting on your desktop.

The 3ve botnet syndicate pioneered this evolution. 3ve transitioned from basic data-center scripts to hijacking more than a million IP addresses, many of them residential machines infected with Kovter malware. This malware secretly installed and ran hidden Chromium instances on infected machines.

The impact on telemetry was catastrophic. We saw a sudden 400% spike in CTR from residential IP blocks. Synthetic traffic started passing IAS and Moat viewability checks with 100% render rates.

Bot traffic was suddenly passing reCAPTCHA v3 tests with human-level 0.9 scores. Fraudsters weren’t faking JavaScript execution anymore. They were actually doing it.

Puppeteer communicates directly with the browser via the Chrome DevTools Protocol (CDP). The automated browser parses the DOM, loads nested iframes, and executes complex measurement SDKs like the IAB's Open Measurement SDK (OMID). The pixel fires inside a real V8 engine, but there is zero human intent.

Fingerprinting the Puppeteer Payload

Spinning up a headless browser is trivial. Bot operators deploy a ten-line Node.js script via Docker and launch thousands of concurrent Chromium instances. But raw Puppeteer is incredibly noisy.

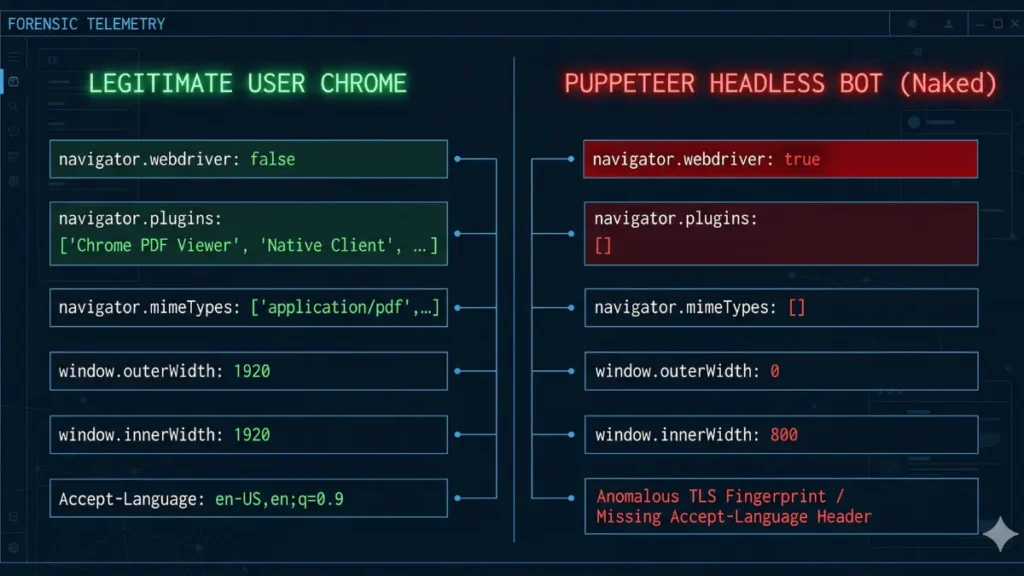

Out of the box, it bleeds technical indicators that scream automation. We ignore the programmatic supply chain fluff. We look past the network layer directly into the JavaScript engine.

[FORENSIC EXTRACT: NAKED PUPPETEER PAYLOAD DETECTED]

{
  "user_agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) HeadlessChrome/119.0.6045.105 Safari/537.36",
  "navigator.webdriver": true,
  "navigator.plugins.length": 0,
  "navigator.mimeTypes.length": 0,
  "window.outerWidth": 0,
  "window.outerHeight": 0,
  "ja3_hash": "a0e9f5d64349fb13191bc781f81f42e1",
  "tls_anomaly": "Missing GREASE extensions"
}
// ACTION: FLAG AS SYNTHETIC

Here is what a naked Puppeteer payload looks like under the forensic microscope:

- The WebDriver Flag: Chromium sets navigator.webdriver = true whenever the browser is under automation control, which includes a default headless Puppeteer launch. It is a massive alarm bell.
- Plugin Starvation: Headless instances return completely empty lists for both navigator.plugins and navigator.mimeTypes.
- Resolution Mismatches: If window.outerWidth equals 0, or severely mismatches window.innerWidth, you are rendering ads on a phantom screen.
- TLS and Header Anomalies: We routinely see JA3 hash mismatches and dropped Accept-Language headers in the initial navigation request.
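The client-side indicators in this list can be checked in a few lines. Below is a minimal sketch: `collectFingerprint` reads the same properties shown in the forensic extract, and `scoreFingerprint` turns them into named signals. Both function names, the signal labels, and the two-signal threshold are assumptions for illustration, and the TLS-level checks are left out because they have to run server-side.

```javascript
// Runs in the page: pass `window`. Kept separate so scoring can run anywhere.
function collectFingerprint(win) {
  const nav = win.navigator;
  return {
    userAgent: nav.userAgent,
    webdriver: nav.webdriver === true,
    pluginsLength: nav.plugins.length,
    mimeTypesLength: nav.mimeTypes.length,
    outerWidth: win.outerWidth,
    outerHeight: win.outerHeight,
    innerWidth: win.innerWidth,
  };
}

function scoreFingerprint(fp) {
  const signals = [];
  if (/HeadlessChrome/.test(fp.userAgent || "")) signals.push("headless-ua");
  if (fp.webdriver === true) signals.push("webdriver-flag");
  if (fp.pluginsLength === 0 && fp.mimeTypesLength === 0) signals.push("plugin-starvation");
  if (fp.outerWidth === 0 || fp.outerHeight === 0) {
    signals.push("phantom-window");
  } else if (fp.outerWidth < fp.innerWidth) {
    // Caveat: a zoomed-out page can trip this legitimately.
    signals.push("window-mismatch");
  }
  // Assumed threshold: mobile browsers also report zero plugins,
  // so one signal alone is not treated as proof.
  return { signals, synthetic: signals.length >= 2 };
}

// Values taken from the forensic extract above.
const naked = scoreFingerprint({
  userAgent:
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) HeadlessChrome/119.0.6045.105 Safari/537.36",
  webdriver: true,
  pluginsLength: 0,
  mimeTypesLength: 0,
  outerWidth: 0,
  outerHeight: 0,
  innerWidth: 800,
});
console.log(naked.signals);
// [ 'headless-ua', 'webdriver-flag', 'plugin-starvation', 'phantom-window' ]
```

In a page this would be called as `scoreFingerprint(collectFingerprint(window))`. A stealth-patched browser will pass every one of these checks, which is why the next step has to look at the patches themselves.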

Fraudsters know this. They aggressively spoof these properties using libraries like puppeteer-extra-plugin-stealth. The arms race immediately shifts from basic header filtering to deep runtime forensics.

Tarpitting Headless Execution

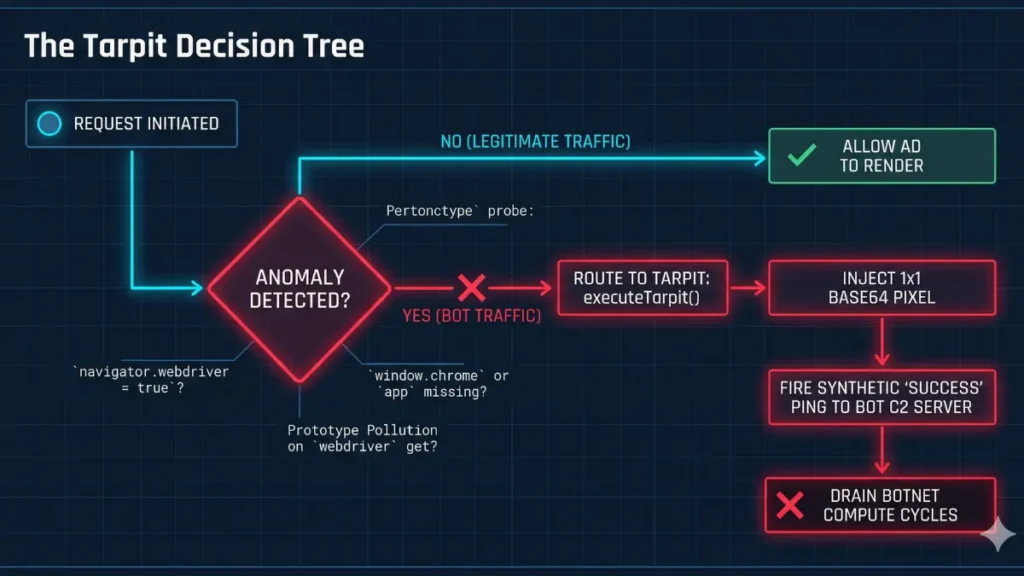

Ad-tech vendors sell you expensive black boxes analyzing network packets. They look in the wrong place. We analyze the JavaScript runtime environment.

You cannot fake a V8 engine perfectly. Vendors sleep. We build traps.

Elite syndicates use customized stealth plugins to patch specific leaks by monkey-patching native prototypes. They overwrite native JavaScript functions and getters to lie to our detection scripts. We need to detect the lie itself.
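One way to catch an overwritten native is to check whether the function still looks native and still lives where the browser put it. The sketch below is a starting point under stated assumptions, not a complete detector: `looksNative` and `findTampering` are hypothetical helpers, and a stealth plugin that also patches `Function.prototype.toString` or wraps functions in a `Proxy` will get past the first check.

```javascript
// Take a reference up front; a plugin that patched toString before this
// script loaded will still defeat it.
const nativeToString = Function.prototype.toString;

// Built-in functions stringify as "function name() { [native code] }".
function looksNative(fn) {
  return (
    typeof fn === "function" &&
    /\{\s*\[native code\]\s*\}\s*$/.test(nativeToString.call(fn))
  );
}

// Reports why a property such as navigator.webdriver looks patched.
function findTampering(obj, prop) {
  const reasons = [];
  // Browser attributes are accessors on the prototype, so an own
  // property on the instance suggests something shadowed the original.
  if (Object.prototype.hasOwnProperty.call(obj, prop)) {
    reasons.push("own-property-override");
  }
  // Walk up the prototype chain to whichever descriptor is actually in effect.
  let holder = obj;
  let descriptor;
  while (holder !== null && descriptor === undefined) {
    descriptor = Object.getOwnPropertyDescriptor(holder, prop);
    holder = Object.getPrototypeOf(holder);
  }
  if (descriptor && descriptor.get && !looksNative(descriptor.get)) {
    reasons.push("non-native-getter");
  }
  return reasons;
}

// Stand-in for a patched navigator: a script-defined getter on the instance.
const patched = {};
Object.defineProperty(patched, "webdriver", { get: () => false });
console.log(findTampering(patched, "webdriver"));
// [ 'own-property-override', 'non-native-getter' ]
```

In a page the call would be `findTampering(navigator, "webdriver")`. An untouched built-in accessor, such as `size` on a `Map`, comes back with no reasons.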

If you just drop the connection, the bot’s C2 server logs a timeout. They realize they are caught, rotate the IP, and hit you again. We want to bleed their infrastructure budget.

We build a tarpit. We overwrite the ad slot with a 1×1 transparent pixel but let the bot’s telemetry script fire a synthetic “Success” ping. They consume their own compute cycles rendering empty air.

Here is your deployable tarpit payload. Drop this directly above your primary viewability tags.
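A minimal sketch of such a tarpit follows, under stated assumptions. It expects a `verdict` object with a `synthetic` flag from an earlier detection step, a `renderAd` callback that loads the real creative, and an optional `reportTarpit` callback for first-party accounting. All of these names are hypothetical, and `doc` is passed in so the logic can be exercised outside a browser.

```javascript
// 1x1 transparent GIF, inlined so the swap costs no extra request.
const TRANSPARENT_PIXEL =
  "data:image/gif;base64,R0lGODlhAQABAIAAAAAAAP///yH5BAEAAAAALAAAAAABAAEAAAIBRAA7";

function guardAdSlot({ verdict, slot, doc, renderAd, reportTarpit = () => {} }) {
  if (!verdict.synthetic) {
    renderAd(slot); // normal path: load the real creative
    return "served";
  }
  const pixel = doc.createElement("img");
  pixel.src = TRANSPARENT_PIXEL;
  pixel.width = 1;
  pixel.height = 1;
  pixel.alt = "";
  slot.replaceChildren(pixel); // the real creative never loads
  reportTarpit(verdict.signals); // first-party record, so the impression is not billed
  // No error is thrown and no connection is dropped, so the bot's own
  // scripts keep running and see nothing unusual.
  return "tarpitted";
}

// Browser usage (loadCreative is a hypothetical function):
// guardAdSlot({ verdict, slot: document.getElementById("ad-slot"), doc: document, renderAd: loadCreative });
```

The key design choice is that the synthetic branch fails silently. From the bot's side the slot rendered and its telemetry fired; from yours, the creative was never delivered.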

Force the botnet to waste bandwidth. Let their internal metrics report a flawless victory while you protect the budget at the client level.