The programmatic supply chain is built on a very expensive lie. Ad-tech vendors are happily collecting their rev-share while bots siphon billions from your media budgets. You are buying ghosts.

We pretend that tracking a user’s browser fingerprint is the ultimate defense against ad fraud. It is not. Fraud engineers are laughing at our collective reliance on easily spoofed client-side metrics.

The Verification Illusion: Relying on Pixel Dust

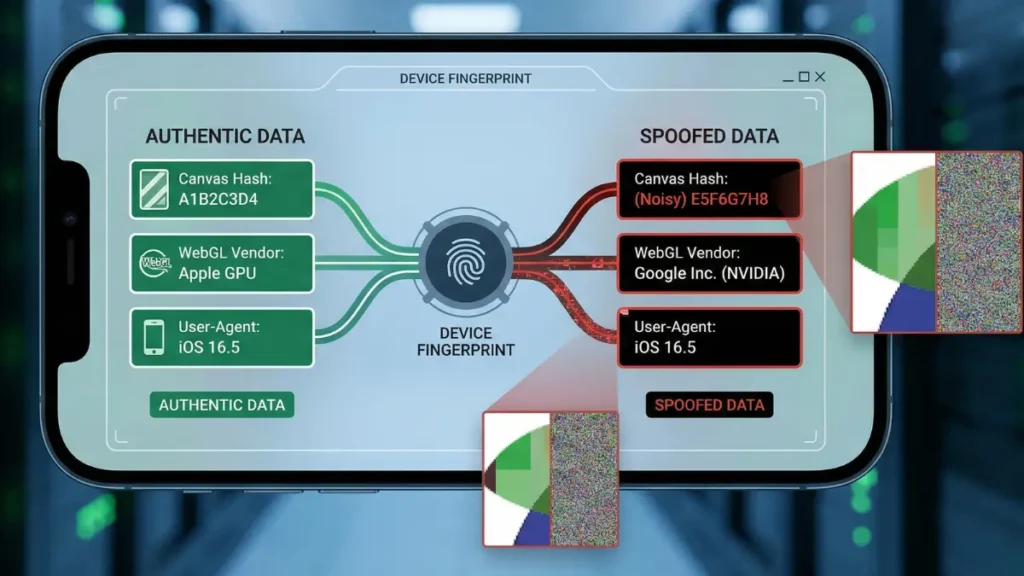

Most ad-tech vendors sell “device intelligence” as a silver bullet. They assume a unique Canvas hash equates to a breathing human. Fraudsters know this. They exploit it daily.

The legacy logic relies on tiny hardware discrepancies. Anti-fraud scripts force the browser to draw something invisible.

- The Render: A script injects a hidden `<canvas>` element into the DOM.
- The Execution: It draws a complex 2D text string or a 3D WebGL shape with specific blending modes.
- The Extraction: The anti-fraud tag reads the output via `toDataURL()` or `getImageData()`.
- The Hash: The raw pixel buffer is cryptographically hashed into a supposedly unique identifier.
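The four steps above fit in a few lines, which is part of the problem. What follows is a minimal sketch, not any vendor's actual tag: the probe text, canvas size, and function names are illustrative assumptions, and FNV-1a is a non-cryptographic stand-in for whatever hash a real tag uses.

```javascript
// FNV-1a (32-bit) over a byte buffer. A non-cryptographic stand-in for the
// vendor's hash, used here only because it is synchronous and dependency-free.
function fnv1a(bytes) {
  let hash = 0x811c9dc5; // FNV offset basis
  for (let i = 0; i < bytes.length; i++) {
    hash ^= bytes[i];
    hash = Math.imul(hash, 0x01000193) >>> 0; // multiply by the FNV prime, keep 32 bits
  }
  return hash.toString(16).padStart(8, '0');
}

// Browser-only: render a probe on an off-screen canvas and hash its pixels.
// `doc` is expected to be the page's `document`.
function canvasFingerprint(doc) {
  const canvas = doc.createElement('canvas');
  canvas.width = 240;
  canvas.height = 60;
  const ctx = canvas.getContext('2d');
  ctx.textBaseline = 'top';
  ctx.font = '16px Arial';
  ctx.globalCompositeOperation = 'multiply'; // blending mode exercises the rasterizer
  ctx.fillStyle = 'rgba(102, 204, 0, 0.7)';
  ctx.fillText('fingerprint probe', 4, 8);
  const { data } = ctx.getImageData(0, 0, canvas.width, canvas.height);
  return fnv1a(data);
}

// In a browser: canvasFingerprint(document)
```

Every input to the hash comes from an API call the page does not control. Change one byte anywhere in `data` and the identifier changes with it.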

Minor variations in GPU architecture, OS rendering engines, and anti-aliasing algorithms create microscopic pixel differences. The vendor records the hash. They flag the session as legitimate. They bill you for the impression.

It is a beautiful theory. It is also completely broken.

We treat hardware rendering as an immutable physical law. It isn’t. It is just another JavaScript API waiting to be intercepted by a headless automation framework.

Injecting the Noise: Poisoning the Canvas API

This is where the fraud engineer earns their paycheck. They do not block the canvas fingerprinting scripts. That would trigger a massive red flag in any decent verification vendor’s dashboard.

Instead, they poison the well. Automation frameworks like Puppeteer and Playwright let them inject a script that patches the canvas prototypes before your page even loads. The patched methods intercept the raw image buffer directly at the API level.

The exploit relies on rewriting native JavaScript functions:

- The Hijack: Bots overwrite `HTMLCanvasElement.prototype.toDataURL` and `CanvasRenderingContext2D.prototype.getImageData`.
- The Math: They generate a small pseudo-random offset for the RGB values.
- The Injection: This microscopic noise is applied to random pixels in the canvas output before the verification vendor hashes it.
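One cheap defensive consequence of the steps above: if the noise is re-rolled on every read, the same drawing stops producing the same bytes. Here is a minimal sketch of that stability check. `readPixels` is a hypothetical callback you would supply, and the two readers at the bottom are simulations, not real canvas calls.

```javascript
// Returns true when repeated reads of the same drawing are byte-identical.
// `readPixels` is a hypothetical callback that redraws the probe and returns
// its pixel bytes (in a browser, for example, getImageData(...).data).
function isCanvasReadStable(readPixels, rounds = 3) {
  const first = Array.from(readPixels());
  for (let r = 1; r < rounds; r++) {
    const next = readPixels();
    if (next.length !== first.length) return false;
    for (let i = 0; i < first.length; i++) {
      if (next[i] !== first[i]) return false;
    }
  }
  return true;
}

// Simulated readers, for illustration only.
const clean = () => Uint8ClampedArray.from([10, 20, 30, 255]);
let reads = 0;
const noisy = () => {
  const px = clean();
  if (reads++ % 2 === 1) px[0] ^= 1; // low-bit flip on every other read
  return px;
};

console.log(isCanvasReadStable(clean)); // true
console.log(isCanvasReadStable(noisy)); // false
```

This only catches per-call noise. An injector that fixes its offset for the whole session returns identical bytes every time and passes, so treat an unstable read as a strong signal and a stable one as no signal at all.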

It is algorithmic forgery at scale. To the verification script, the modified pixel buffer generates a perfectly unique, seemingly valid hash. The botnet just printed a brand-new, highly targeted device identity out of thin air. You pay for the impression.

Spoofing the Silicon: WebGL Vendor Forgery

A rented $5 Linux server cannot command premium programmatic CPMs. It needs to look like a $2,000 MacBook Pro to clear the CPM floors. The disguise requires hardware-level spoofing.

We are looking at the blatant exploitation of the WEBGL_debug_renderer_info extension. Fraudsters intercept the browser’s native queries to UNMASKED_VENDOR_WEBGL and UNMASKED_RENDERER_WEBGL.

They swap out the truth. The real Mesa driver or headless SwiftShader strings never reach the verification script. The hooked query returns a forged value instead.

- Linux X11 environments suddenly report `Apple GPU`.
- Headless Chrome instances broadcast `Google Inc. (NVIDIA)`.
- These spoofed strings easily bypass basic deterministic blocking lists.

It is a trivial string replacement. Yet, it consistently fools legacy anti-fraud SDKs that blindly trust client-side hardware telemetry.
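For reference, this is roughly what the query looks like from the verification side. It is a sketch: `readWebglIdentity` is an illustrative name, while `getExtension`, `getParameter`, and the two `UNMASKED_*` constants are the standard WebGL calls for this extension.

```javascript
// Reads the unmasked vendor and renderer strings from a WebGL context.
// Returns nulls when the extension is unavailable or blocked.
function readWebglIdentity(gl) {
  const ext = gl.getExtension('WEBGL_debug_renderer_info');
  if (!ext) {
    return { vendor: null, renderer: null };
  }
  return {
    vendor: gl.getParameter(ext.UNMASKED_VENDOR_WEBGL),
    renderer: gl.getParameter(ext.UNMASKED_RENDERER_WEBGL),
  };
}

// In a browser:
// readWebglIdentity(document.createElement('canvas').getContext('webgl'))
```

Two strings come back from one method call. Nothing in the return value attests that it came from the driver rather than from a patched `getParameter`.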

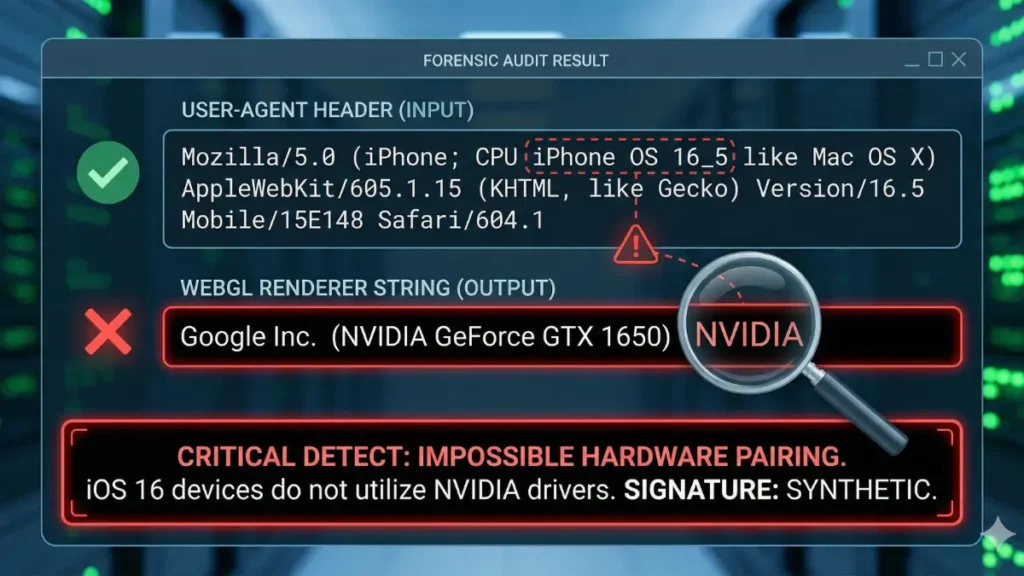

Case Study: The “Frankenstein” GPU Exploit

Let’s look at the log-level data from a recent botnet mimicking residential traffic. This operation shared architectural DNA with the 3ve botnet. They were aggressively targeting high-CPM video inventory via arbitrage sites.

The metrics were terrifyingly clean on the surface. The operation generated 250,000 synthetic bid requests in under an hour. They achieved a flawless 99.8% unique Canvas hash rate.

Network telemetry looked human. Latency remained consistent, hovering below 15ms. But the fraudsters got lazy with their parameter matrices.

We caught the anomaly in the WebGL fingerprinting cross-check. The botnet was passing iOS 16 User-Agents alongside Google Inc. (NVIDIA) WebGL vendor strings.

- The spoofed data bypassed verification and flowed straight into the OpenRTB `device.make` and `device.os` object fields.
- Apple silicon does not run NVIDIA drivers.
- This Frankenstein hardware signature exposed the entire residential proxy cluster.

[THREAT INTEL: HARDWARE SPOOFING ANOMALY - LLD EXTRACT]

{

"timestamp": "2025-11-28T14:02:11.452Z",

"ip_asn": "AS7922 (Comcast Cable Communications, LLC)",

"http_user_agent": "Mozilla/5.0 (iPhone; CPU iPhone OS 16_5 like Mac OS X) AppleWebKit/605.1.15...",

"webgl_vendor_unmasked": "Google Inc. (NVIDIA)",

"webgl_renderer_unmasked": "ANGLE (NVIDIA, NVIDIA GeForce RTX 3060 Direct3D11 vs_5_0 ps_5_0, D3D11)",

"canvas_hash_unique": true,

"calculated_latency": "14.2ms"

}

// CRITICAL ERROR: NVIDIA DRIVERS DETECTED ON PURPORTED iOS 16 DEVICE.

// ACTION: BLACKHOLE TRAFFIC

We burned the IPs and blackholed the traffic.
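The cross-check that flags a record like the one above can be sketched in a few lines. The field names come from the log extract; the function name and the specific rule patterns are illustrative assumptions, not a complete ruleset.

```javascript
// Returns a list of reasons why the claimed user agent and the reported GPU
// cannot belong to the same device. An empty list means no rule fired.
function findHardwareMismatches(record) {
  const ua = record.http_user_agent || '';
  const gpu = `${record.webgl_vendor_unmasked || ''} ${record.webgl_renderer_unmasked || ''}`;
  const claimsIos = /\b(iPhone|iPad)\b/.test(ua);
  const claimsApple = claimsIos || /\bMacintosh\b/.test(ua);
  const reasons = [];
  if (claimsIos && /NVIDIA|GeForce|Radeon/i.test(gpu)) {
    reasons.push('desktop GPU reported by an iOS user agent');
  }
  if (claimsApple && /Direct3D|D3D11/i.test(gpu)) {
    reasons.push('Direct3D backend reported by an Apple user agent');
  }
  if (/SwiftShader/i.test(gpu)) {
    reasons.push('software renderer typical of headless hosts');
  }
  return reasons;
}

const suspect = {
  http_user_agent: 'Mozilla/5.0 (iPhone; CPU iPhone OS 16_5 like Mac OS X) AppleWebKit/605.1.15...',
  webgl_vendor_unmasked: 'Google Inc. (NVIDIA)',
  webgl_renderer_unmasked: 'ANGLE (NVIDIA, NVIDIA GeForce RTX 3060 Direct3D11 vs_5_0 ps_5_0, D3D11)',
};
console.log(findHardwareMismatches(suspect).length); // 2
```

Rules like these only catch the lazy operators. A botnet that keeps its user agent and GPU strings consistent sails through, which is why the string check is a tripwire and not a defense.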

Detecting Native Function Tampering

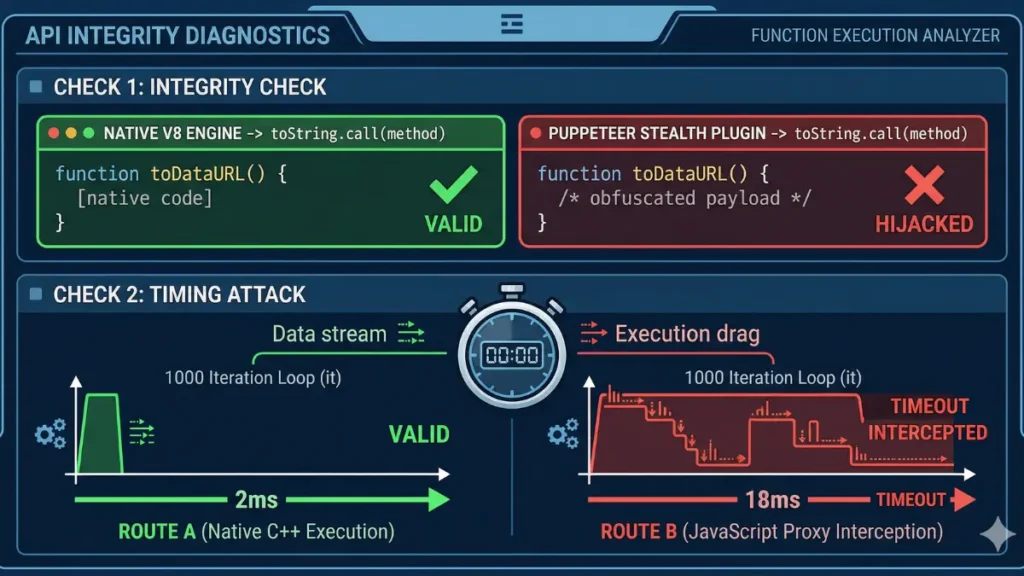

Stop trusting the hash. If the pixel buffer is poisoned upstream, your cryptographic checksum is worthless. You must verify the integrity of the rendering engine itself.

Fraudsters use JavaScript Proxies to intercept Canvas APIs and inject their noise. This interception costs CPU cycles. We exploit that latency.

They also try to hide their tracks by patching Function.prototype.toString to make their hooked functions look native. We look right past the patch. We force the browser to reveal whether the function is genuinely native or hijacked by a stealth plugin.
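A first-pass version of that check might look like the sketch below. `isLikelyNative` is an illustrative name and the three heuristics are assumptions about what a plain JavaScript replacement gets wrong. They are a filter, not a verdict.

```javascript
// Capture the real toString as early as possible. If a hostile script ran
// first, this reference may already be patched.
const nativeToString = Function.prototype.toString;
const NATIVE_SHAPE = /^function\s*[\w$ ]*\(\)\s*\{\s*\[native code\]\s*\}$/;

// Heuristic: does `fn` look like the built-in method named `expectedName`?
function isLikelyNative(fn, expectedName) {
  if (typeof fn !== 'function') return false;
  let source;
  try {
    source = nativeToString.call(fn);
  } catch (err) {
    return false;
  }
  // 1. Built-ins stringify to a "[native code]" stub, not to JavaScript source.
  if (!NATIVE_SHAPE.test(source)) return false;
  // 2. Built-in methods are not constructors and carry no own `prototype`.
  if (Object.prototype.hasOwnProperty.call(fn, 'prototype')) return false;
  // 3. Wrappers made with bind() report names such as "bound toDataURL".
  return fn.name === expectedName;
}

console.log(isLikelyNative(Math.max, 'max')); // true
console.log(isLikelyNative(function max() { return 0; }, 'max')); // false

// In a browser:
// isLikelyNative(HTMLCanvasElement.prototype.toDataURL, 'toDataURL')
```

A transparent Proxy around the native function passes all three tests, because the engine stringifies callable proxies as native code and forwards property reads to the target. Catching those takes behavioral checks such as the timing approach described above.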

This execution environment must wrap your Prebid.js header bidding payload. If the verification fails, kill the auction call entirely. Do not let the bid reach the SSP.

Here is your baseline native integrity killswitch. Deploy this before you fire a single programmatic bid request.
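A minimal sketch of such a gate follows. `pbjs.que` and `pbjs.requestBids` are the standard Prebid.js entry points; `failedChecks`, `requestBidsIfClean`, and the shape of the `checks` map are illustrative assumptions, and the checks you plug in are up to you.

```javascript
// Runs every check and returns the names of those that failed or threw.
// `checks` maps a label to a zero-argument function returning true when clean.
function failedChecks(checks) {
  const failed = [];
  for (const [name, check] of Object.entries(checks)) {
    let ok = false;
    try {
      ok = check() === true;
    } catch (err) {
      ok = false; // a check that throws is treated as a failure
    }
    if (!ok) failed.push(name);
  }
  return failed;
}

// Starts the Prebid auction only when every integrity check passes.
// `requestOptions` is handed to pbjs.requestBids unchanged.
function requestBidsIfClean(pbjs, checks, requestOptions) {
  const failed = failedChecks(checks);
  if (failed.length > 0) {
    return { auctionStarted: false, failed }; // no bid request leaves the page
  }
  pbjs.que.push(() => pbjs.requestBids(requestOptions));
  return { auctionStarted: true, failed };
}

// In a browser, with one inline check on the canvas API:
// requestBidsIfClean(window.pbjs, {
//   toDataURLNative: () => /\[native code\]/.test(
//     Function.prototype.toString.call(HTMLCanvasElement.prototype.toDataURL)),
// }, { timeout: 1000, bidsBackHandler: () => { /* render ads */ } });
```

The gate fails closed. A check that throws counts as a failure, and the returned `failed` list tells your logging which tripwire fired.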

If this script triggers, the session is fully compromised. The “user” is a headless Chrome instance running on a rented datacenter server or a hijacked residential proxy—standard components of modern bot infrastructure.

Do not flag the traffic for review. Do not pass the data to your CRM. Drop the hammer. Burn the session instantly. Stop buying ghosts.